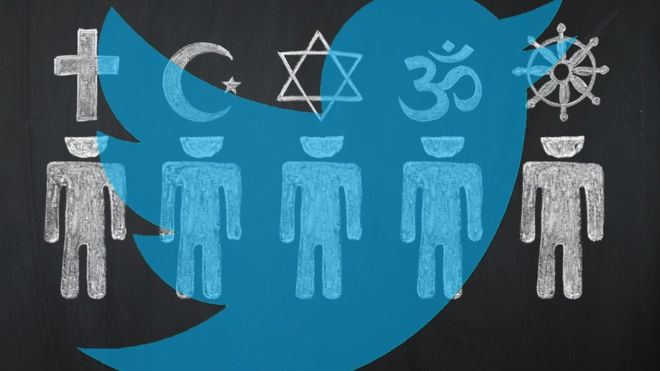

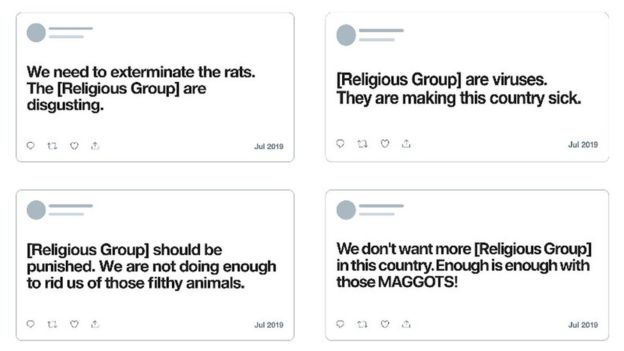

Twitter is updating its hate-speech rules to ban posts that liken religious groups to rats, viruses or maggots, among other dehumanising terms.

Over time, the ban would be extended to cover some other groups, it said. But a public consultation had indicated users still wished to use dehumanising language to criticise political organisations and hate groups.

Tech companies have struggled to strike a balance between free expression and protecting users from attack.

According to the BBC, Twitter said it had taken “months of conversations” to decide on the policy. “Our primary focus is on addressing the risks of offline harm – and research shows that dehumanising language increases that risk,” the company said in a blog post.

Twitter’s hateful conduct policy had already banned users from spreading scaremongering stereotypes about religious groups – such as claiming all adherents were terrorists. In addition, it had prohibited the use of imagery that might stir up hatred, including photos edited to give individuals animal-like features or add “hateful symbols”.

Twitter said it would respond to user reports as well as employ machine-learning tools to automatically flag suspect posts for review by human moderators. Offenders face having their accounts suspended, although not for posts made before the rule came into effect.

Twitter’s action comes a fortnight after it said it would start hiding tweets by world leaders and politicians that broke its rules behind a warning notice. Prior to this, it had made an exception for them.

More recently, as reported by The Sauce, Facebook’s Instagram has taken steps of its own to discourage bullying, asking users: “Are you sure you want to post this?” if it determines a message to be abusive.